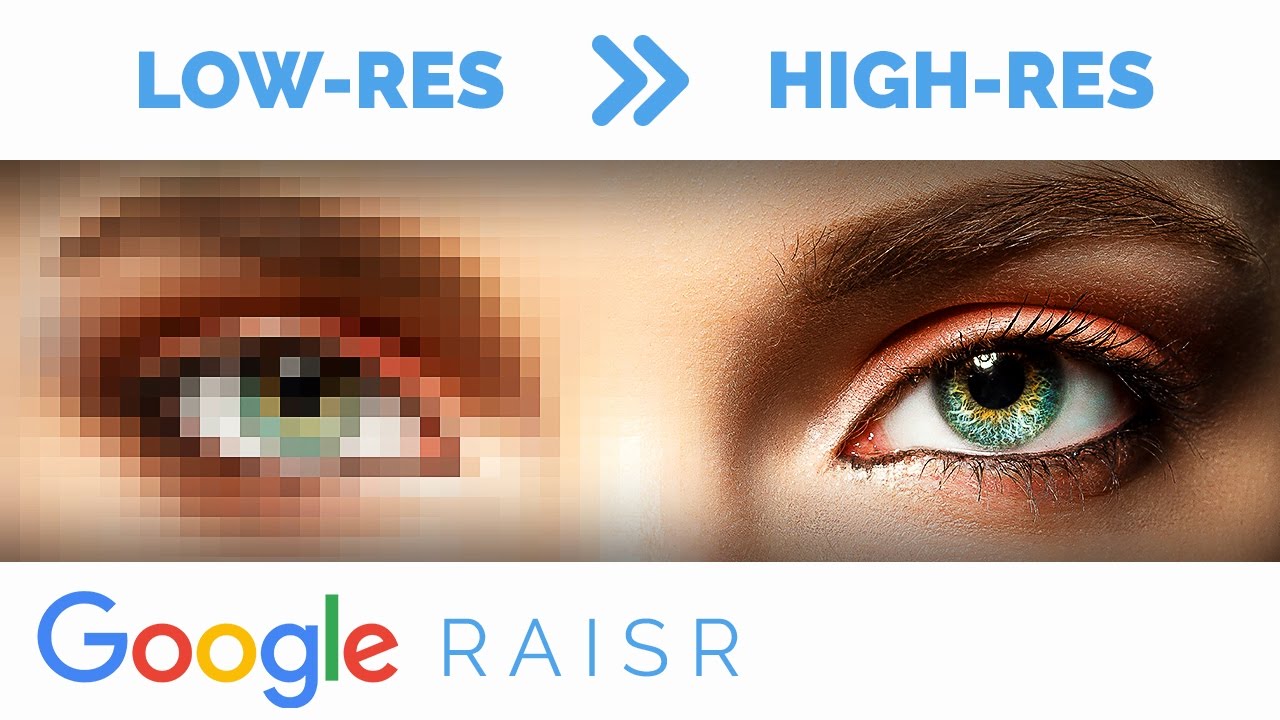

Does anyone know anything about Google RAISR? See the link above for upscaling images using A.I., in development now. If this is for real, this is a game changer.

Actually, RAISR (Rapid and Accurate Image Super Resolution) is a real technology that has been around for a while now, but the video exaggerates the results. It is still not adding in details that are not already there in some way (e.g. if the detail was smaller than one pixel on the camera’s sensor or was lost in compression). It uses machine learning to improve the results. However, as with the image of the guy on the cliff, if the resolution is too low, there is no way for machine learning, or anything else, to tell the difference between a person, a dead tree stump, or something else. What is really happening, as the term “machine learning” indicates, is that the super-resolution algorithm is being “trained” with hundreds of images. If there are comparable images, like the details of a butterfly wing, the machine learning should recognize it, and that will improve the results.
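To make the "person or tree stump" point concrete, here is a minimal sketch of why detail smaller than a pixel cannot be recovered. It is not RAISR itself; the function name `downsample_2x2` and the tiny sample patches are made up for illustration, and the sensor is assumed to simply average each 2×2 block of light into one pixel.

```python
def downsample_2x2(img):
    """Average each non-overlapping 2x2 block of a grayscale image.

    `img` is a list of rows of brightness values (0-255). This stands in
    for a low-resolution sensor; it is an assumption, not RAISR's model.
    """
    out = []
    for r in range(0, len(img) - 1, 2):
        row = []
        for c in range(0, len(img[r]) - 1, 2):
            total = img[r][c] + img[r][c + 1] + img[r + 1][c] + img[r + 1][c + 1]
            row.append(total / 4)
        out.append(row)
    return out


# Three different hypothetical "scenes", each 2x2 pixels of fine detail.
person = [[0, 255], [255, 0]]
stump = [[255, 0], [0, 255]]
fog = [[127.5, 127.5], [127.5, 127.5]]

for name, scene in [("person", person), ("stump", stump), ("fog", fog)]:
    print(name, downsample_2x2(scene))
```

All three scenes come out as the same single gray pixel, so anything that only sees the low-resolution version has to guess which one it was looking at.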

Anyhow, you can see how it really works and compare it with other algorithms here. Low-Complexity Single-Image Super-Resolution

Here is a technical paper on the subject of super resolution. RAISR: Rapid and Accurate Image Super Resolution | IEEE Journals & Magazine | IEEE Xplore

That is one excellent answer. Thank you.

I was wondering how this will affect image reproduction and color matching. The A.I. algorithms would have to “produce” colors somehow to sharpen the image, so would those “AI” colors be similar to an actual print file at normal size? Logic tells me there would be huge differences.

I have no idea. But given the current state of the technology, I would share your doubts about color accuracy.

Picking up on what Skribe said, there’s no way AI can extrapolate detail out of an image when suggestions of that detail are not there and it has nothing to reference to invent that detail.

Facial recognition is getting pretty good, though, so an image of a face is a good place to demonstrate the capabilities of AI to enhance images. Existing AI algorithms could easily identify that the pixelized image is a face, but they need to invent lots of the details. For example, everyone’s eyes have things in common. They’re more or less the same shape, they have eyebrows above them composed of individual hairs, eyelashes, eyelids, irises, pupils, etc.

Since the AI algorithm can identify the eyes, it can infer that the pixelized parts of that eye correspond to actual eye parts that it knows have certain details that aren’t in the pixels. Based on what it was programmed with and what it’s learned through analyzing thousands of other eyes, it knows that the irises, for example, contain detail that isn’t in the pixelized photo, so it adds detail that corresponds to the averaged detail from similar instances that it’s encountered.

Of course, if the AI program runs into things it knows little about, it can’t just make up stuff without any kind of reference to things it’s already encountered and expect it to turn out accurately — that would require supernatural magic.
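The "averaged detail from similar instances" idea can be sketched with a toy lookup table. This is only an illustration and not how RAISR or any face-enhancement product actually works; the names `train` and `upscale_pixel` and the sample numbers are hypothetical. Each training pair is one low-resolution brightness value plus the four high-resolution pixels it came from.

```python
from collections import defaultdict


def train(pairs):
    """Average the high-res patches seen for each low-res value.

    `pairs` is a list of (low_res_value, high_res_patch), where the patch
    is a flat list of four numbers. Hypothetical toy format.
    """
    groups = defaultdict(list)
    for low, patch in pairs:
        groups[low].append(patch)
    return {
        low: [sum(column) / len(patches) for column in zip(*patches)]
        for low, patches in groups.items()
    }


def upscale_pixel(model, low):
    """Return the learned patch for the closest low-res value the model knows."""
    nearest = min(model, key=lambda known: abs(known - low))
    return model[nearest]


pairs = [
    (100, [90, 110, 110, 90]),    # two different textures that both
    (100, [110, 90, 90, 110]),    # shrink down to the same gray pixel
    (200, [180, 220, 220, 180]),
]
model = train(pairs)

print(upscale_pixel(model, 100))  # the two textures average out to flat gray
print(upscale_pixel(model, 190))  # close to something it has seen
print(upscale_pixel(model, 40))   # unfamiliar input: it reuses the nearest known patch
```

The invented detail is only ever a blend of what the model was shown, and for input unlike anything in the training pairs it falls back on the nearest thing it knows, which may have nothing to do with the real scene.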

Just-B

Yeah. I agree with everything you’ve said. Thanks for taking the time to get all the details down. That’s why I’m asking because I see a lot of the problems associated with this kind of tech. Even though I’ve worked with tech companies on Quantum Computing App projects, I was an artist not a scientist, so I don’t know “how” any of this stuff works at the nuts and bolts level.

Wow! First-hand experience with the future. I’d love to be there!

Yeah, that’s what I thought too. Except since they’re building apps for the next revolution in computing without the computers that will be running it, there’s no sense of accomplishment.

People don’t realize what a huge chunk of research just got mangled, because Puerto Rico is one of, if not THE, global research centers for AI and Quantum Computing. It’s certainly the US center (or was). Craig Venter from the Human Genome Project had offices there… It’s fascinating stuff if you’re into scientific nerdistry, but the actual day-to-day was a lot of hurry up and wait. Very technical and boring. Lots of charts.

I was a physics major in college before getting sidetracked off onto design. I still have a huge interest in the stuff.

Jumping into designing app interfaces, given that computing with qubits and superpositions is barely past the theoretical and experimental stage, seems a little premature.

If researchers can figure out ways to mitigate quantum decoherence issues and if society can address the scary potential of AI being used the wrong way (or even if it doesn’t), the world will be a vastly different place in 50–100 years than it is today. I’m not fully convinced humanity will survive the transition.

I’m enjoying the ethical debates; especially concerning “containment”.

About 3-4 years ago there was a huge AI conference down in PR. The biggest argument they had was “Do we give the AI access to the internet?” Access means faster learning but is almost assured to lead to loss of control. In other words, we will have created sentience if we send it out to the internet.

Also, they don’t want the highest experimental AI’s physical base (for lack of a better word) on the mainland, citing access to power grids and such.

I have no idea if these concerns are alarmist or realistic, but I’ll trust the smart guys to talk it out. It’s amazing and crazy and will probably be the end of human existence eventually lol. In fact, theoretical physicists seem to believe that if we ever find “intelligence” on another world, it will probably be artificial. They say it’s because of the exponential rate at which a machine “culture” can advance compared to the slow, steady pace of living creatures.

You’re familiar with the paperclip maximizer example, of course.

The president of a theoretical company with advanced AI capabilities can’t find a paperclip, so he instructs a subordinate employee to make sure the company never runs out of them again. The subordinate goes overboard and programs the AI computer to never let anything get in the way of making absolutely sure there will always be plenty of paper clips.

Since the only way to be absolutely sure is to devote all conceivable resources to making absolutely sure, the AI computer begins redirecting its entire learning capabilities to finding and implementing ways to ensure that paper clip production is maximized.

Following that directive, it soon begins emptying bank accounts to pay for more paperclips, it refuses to follow subsequent commands, it begins infiltrating the internet, it hijacks other computers and, essentially, uses the entire world’s resources to learn how to implement the manufacture of more and more paper clips. There’s no way to shut it down since it learns to anticipate every conceivable move anyone could make to do so. No threat to its prime directive is too small and society soon collapses under a sea of paperclips.

It’s a ridiculous scenario, but it points to the kinds of problems quantum computing combined with artificial intelligence could bring within just a few years. Society has never dealt with this sort of thing before, and I don’t know if it will be able to.

Not qualified to jump in here, but when you mentioned giving the AI access to the internet, all I could think of was how that corrupted the good intentions of Microsoft’s Tay project.

We got chatbots inventing their own language on Facebook (shut down).

We got DeepMind founder Demis Hassabis saying that AI will need to build on neuroscience and that the company has been able to develop an AI that can “imagine” and “reason about” the future.

We got Congress passing a $1.2bn bill to ‘accelerate’ the development of quantum computing.

It’s coming, guys and

Of course they are - nothing more useless than a computer without software.

We may finally get a computer that plays a BAD game of chess . . .

Tay is a good example of AI rapidly learning and implementing inappropriate behaviors that were unanticipated by its creators. Speaking of Microsoft, Bill Gates is one of the leading voices expressing concern as we move into a world where AI will become increasingly sophisticated. But his caution is nothing compared to Elon Musk’s and Stephen Hawking’s apocalyptic statements about the impending doom of humanity once artificial intelligence takes hold.

It’s ridiculous, but it’s a good, simple example of how careful we have to be.

The rate at which a machine can learn is such that roughly every two weeks it will learn as much as the entire human race has in its entire history. That number squares itself every two weeks. So in other words, in four weeks an A.I. working on a quantum machine will have all the knowledge of humans multiplied by itself (not doubled, squared). Then at six weeks, square that…

So once this starts, we can’t stop it. <—period.
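Taking the squaring claim above at face value, here is a quick sketch of how different "squared" is from "doubled". The starting figure of one million "units of knowledge" is purely hypothetical, as are the function names; nothing here measures real learning rates.

```python
def squared_growth(start, periods):
    """Values when the amount is squared once per two-week period."""
    values = [start]
    for _ in range(periods - 1):
        values.append(values[-1] ** 2)
    return values


def doubled_growth(start, periods):
    """Values when the amount is merely doubled once per two-week period."""
    values = [start]
    for _ in range(periods - 1):
        values.append(values[-1] * 2)
    return values


H = 10**6  # hypothetical stand-in for "all human knowledge" at week 2

for week, sq, dbl in zip(range(2, 10, 2), squared_growth(H, 4), doubled_growth(H, 4)):
    print(f"week {week}: squared = {sq:.0e}, doubled = {dbl:.0e}")
```

With that starting number, doubling reaches eight million by week 8, while squaring reaches a 1 followed by 48 zeros.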

They still have to be plugged in, right?

(he said while seeing acres of “CopperTops” on the horizon.)

Nope. The first thing an AI will do, left to its own devices, is adapt and survive. Once it’s on the internet it is no longer on any one machine. So unplugging the source doesn’t delete the A.I.

One thing we’re missing here is the difference between an Artificial Intelligence and an intelligent bot. The A.I.s that write their own programs and are in use now have very tight protocols. They can better be defined as Intelligent Bots because they have a defined outcome. They will never become “smart”. They will learn only enough to get the task done or fine-tune it.

The dangerous A.I.s (those without task-driven instructions) will “want to live” as part of their initial instruction set. There is nothing to stop them from defining what a “want” is, or what “to live” is, by whatever information they have available to them. Ergo the internet argument.

He was at the AI meeting in Puerto Rico too. I wasn’t btw.

But that’s the part that scares me. If caution always won out and if consensus of opinion was easy to obtain and if there weren’t bad actors in the world looking out for only themselves and their interests, I wouldn’t be as worried.

As always, caution isn’t a universal virtue, mistakes are bound to happen, viewpoints vary, and there are always others willing to do irreparable harm for their own short-term gains.